Building Your First Skill: From YAML to Working Workflow

- Stephen Jones

- Ai

- March 24, 2026

Welcome back to the series. In Part 1, we covered what Claude Code skills are, why they matter, and how they transform Claude from a general-purpose assistant into a specialist that knows your workflows. Now it’s time to build one.

This is the hands-on tutorial. By the end of this post, you’ll have a working skill, understand every configuration option available to you, and know how to test and iterate until it’s rock solid.

Let’s get into it.

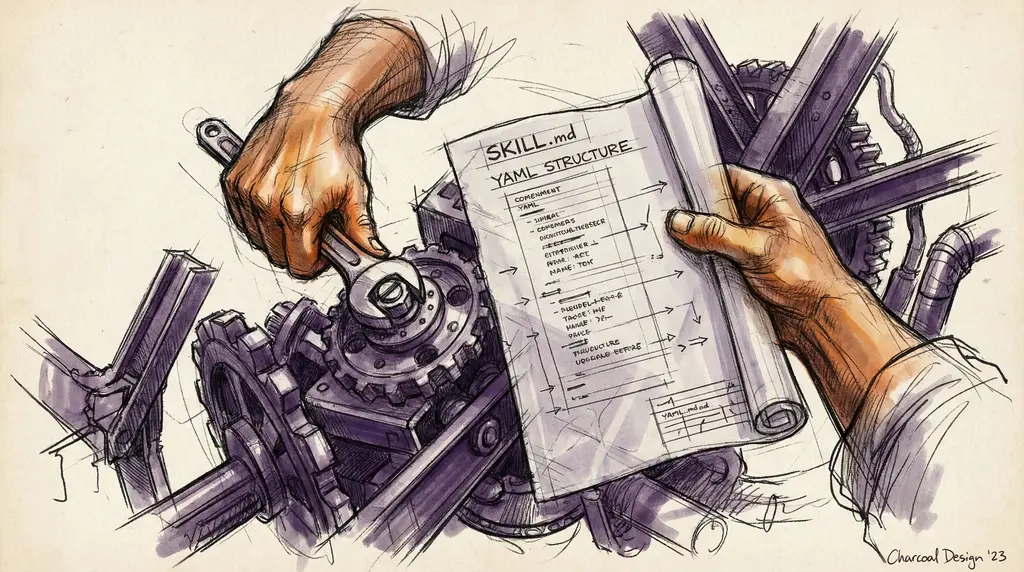

Technical Requirements

Before writing a single line, you need to know the file structure rules. Get these wrong and Claude will silently ignore your skill.

Folder naming: Must be kebab-case. notion-project-setup works. NotionProjectSetup or notion_project_setup won’t.

The skill file: Must be named exactly SKILL.md, case-sensitive. skill.md, Skill.md, and Skills.md will all be ignored. Don’t put a README.md inside your skill folder either.

Where skills live:

- Personal skills (available everywhere you use Claude Code): ~/.claude/skills/<skill-name>/SKILL.md

- Project skills (available only in that repo): .claude/skills/<skill-name>/SKILL.md

That’s it. One folder, one file. The simplicity is intentional: it keeps skills portable and easy to share.
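If you want to catch naming mistakes before Claude silently skips your skill, a small check like the one below can help. This is a minimal sketch, not an official tool: the function name `check_skill_layout` and the exact kebab-case pattern (lowercase letters, digits, single hyphens) are my assumptions based on the rules above.

```python
import re
from pathlib import Path

# Assumed kebab-case pattern: lowercase letters/digits separated by single hyphens.
KEBAB_CASE = re.compile(r"^[a-z0-9]+(-[a-z0-9]+)*$")


def check_skill_layout(skill_dir: Path) -> list[str]:
    """Return a list of problems with a skill folder; an empty list means it looks fine."""
    problems = []
    if not KEBAB_CASE.match(skill_dir.name):
        problems.append(f"folder name {skill_dir.name!r} is not kebab-case")

    # Compare the stored file names exactly, so the check stays case-sensitive
    # even on case-insensitive filesystems.
    names = {p.name for p in skill_dir.iterdir()} if skill_dir.is_dir() else set()
    if "SKILL.md" not in names:
        problems.append("missing SKILL.md (exact name, case-sensitive)")
    if "README.md" in names:
        problems.append("README.md found inside the skill folder")
    return problems
```

Point it at a folder such as `Path.home() / ".claude" / "skills" / "notion-project-setup"` and fix whatever it reports before you start debugging anything else.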

The YAML Frontmatter

The frontmatter is the control panel for your skill. It tells Claude when to activate it, what tools it can use, and how it should run. Here’s the complete field reference:

---
name: my-skill-name
description: >
  What this skill does and when to use it. Claude uses this
  to decide when to apply the skill automatically.
argument-hint: [issue-number]
disable-model-invocation: true
user-invocable: true
allowed-tools: Read, Grep, Glob
model: claude-sonnet-4-20250514
context: fork
agent: Explore
---

Let me walk through each field:

| Field | Required | Purpose |
|---|---|---|
| name | No (uses dir name) | Display name, kebab-case, max 64 chars |
| description | Recommended | Tells Claude when to trigger (max 1024 chars) |
| argument-hint | No | Autocomplete hint (e.g., [issue-number]) |
| disable-model-invocation | No | true = manual-only via /name |
| user-invocable | No | false = hidden from menu, Claude-only |
| allowed-tools | No | Tools allowed without permission prompts |
| model | No | Override model for this skill |
| context | No | fork = runs in subagent |
| agent | No | Subagent type when forked |

A few things worth highlighting. The description field is doing the heavy lifting: it’s what Claude reads to decide whether your skill is relevant to a given prompt. We’ll cover how to write great descriptions in the next section.

The allowed-tools field is a quality-of-life feature. Without it, Claude falls back to your normal permission settings and may prompt you before it uses a tool. List the tools your skill needs and they’ll run without prompts while the skill is active.

Setting context: fork runs your skill in a subagent, an isolated context that won’t pollute your main conversation. This is ideal for skills that do a lot of exploratory work.

Security restrictions to keep in mind:

- Name cannot contain “anthropic” or “claude”

- No XML tags in name or description

- Description cannot contain angle brackets
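These limits are easy to lint for before you ship a skill. The sketch below encodes only the constraints listed in this post (the 64 and 1024 character caps from the field table, plus the three restrictions above). The function name `check_frontmatter` is hypothetical, and Claude’s real validation may be stricter or differ in detail.

```python
RESERVED_WORDS = ("anthropic", "claude")
MAX_NAME_CHARS = 64
MAX_DESCRIPTION_CHARS = 1024


def check_frontmatter(name: str, description: str) -> list[str]:
    """Return the rules from this post that the name/description pair violates."""
    problems = []
    if len(name) > MAX_NAME_CHARS:
        problems.append(f"name is longer than {MAX_NAME_CHARS} characters")
    if any(word in name.lower() for word in RESERVED_WORDS):
        problems.append('name contains "anthropic" or "claude"')
    if len(description) > MAX_DESCRIPTION_CHARS:
        problems.append(f"description is longer than {MAX_DESCRIPTION_CHARS} characters")
    # Rejecting any angle bracket also rules out XML tags.
    if "<" in name or ">" in name:
        problems.append("name contains angle brackets")
    if "<" in description or ">" in description:
        problems.append("description contains angle brackets")
    return problems
```

For example, `check_frontmatter("claude-helper", "Formats <b>reports</b>")` flags both the reserved word and the angle brackets.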

Invocation control matrix:

This is how disable-model-invocation and user-invocable interact:

| Settings | User Can Invoke | Claude Can Invoke |
|---|---|---|
| (default) | Yes | Yes |
| disable-model-invocation: true | Yes | No |
| user-invocable: false | No | Yes |

The default is usually what you want. Use disable-model-invocation: true for skills that are expensive or destructive: things you only want to run when you explicitly ask for them. Use user-invocable: false for background skills that Claude should apply automatically but that don’t make sense as standalone commands.
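If it helps to see the matrix as logic, here’s a tiny sketch. The names `Invocation` and `invocation` are mine, not part of Claude Code. The fourth combination (both flags flipped) isn’t in the table above, so treat the result for that case as my extrapolation of the two independent rules rather than documented behavior.

```python
from typing import NamedTuple


class Invocation(NamedTuple):
    user_can_invoke: bool
    claude_can_invoke: bool


def invocation(disable_model_invocation: bool = False,
               user_invocable: bool = True) -> Invocation:
    """Mirror the invocation matrix: each flag controls exactly one column."""
    return Invocation(
        user_can_invoke=user_invocable,
        claude_can_invoke=not disable_model_invocation,
    )
```

Calling `invocation()` with no arguments gives the default row, and `invocation(disable_model_invocation=True)` gives the manual-only row.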

Writing Killer Descriptions

The description is the single most important field in your frontmatter. A bad description means your skill either never triggers or triggers on everything.

The formula: What it does + When to use it + Key capabilities.

Here are examples of what not to do:

# BAD: Too vague. Claude can't decide when to trigger
description: Helps with code

# BAD: Too technical. Describes implementation, not use case
description: Queries /api/v2/sprint endpoint with OAuth2 bearer token

# BAD: Too broad. Will trigger on everything
description: Assists with any development task

And here’s what good looks like:

# GOOD: Clear trigger, specific scope
description: >
  Generates weekly sprint reports by pulling data from Jira and GitHub.
  Use when preparing for sprint review meetings or stakeholder updates.
  Produces formatted Markdown with velocity charts and blocker summaries.

# GOOD: Specific domain, clear when-to-use
description: >
  Reviews pull requests for security vulnerabilities using OWASP Top 10.
  Use when reviewing PRs that touch authentication, authorization, or
  data handling code. Checks for SQL injection, XSS, and CSRF patterns.

# GOOD: MCP-enhanced, mentions tools
description: >
  Processes customer onboarding by coordinating across Salesforce, Slack,
  and Google Workspace via their MCP servers. Use when a new customer
  signs their contract and needs account setup, welcome messages, and
  shared drive provisioning.

Notice the pattern. Each good description tells Claude: here’s the domain, here’s when the user would need this, and here’s what I produce. That’s what lets Claude make smart triggering decisions.

Writing the Main Instructions

Everything after the frontmatter closing --- is your skill’s instructions. This is the playbook Claude follows when the skill activates.

Here’s a template structure that works well:

## Overview

Brief description of what this skill does and the problem it solves.

## When to Use

- Specific trigger scenarios

- What the user might say

## Steps

1. First action with specific tool references

2. Second action with expected format

3. Validation step

## Output Format

Describe the expected output structure.

## Error Handling

- If [tool] fails: [fallback action]

- If [data] is missing: [ask user or skip]

## Examples

### Good Output

[Show what success looks like]

### Common Mistakes

[Show what to avoid]

The key principle: be specific and actionable. Compare these two approaches:

# BAD: Vague

Review the code and suggest improvements.

# GOOD: Specific and actionable

1. Use the Read tool to load all changed files from the PR

2. Check each file against these criteria:
   - No hardcoded secrets (API keys, passwords, tokens)
   - All public functions have error handling
   - SQL queries use parameterized statements

3. For each issue found, output:
   - File path and line number
   - Severity: CRITICAL / WARNING / INFO
   - Specific fix recommendation with code example

The vague version leaves Claude guessing. The specific version gives it a checklist it can execute reliably every time.

Best practices for instructions:

- Keep SKILL.md under 500 lines. Claude skims long blocks. Put critical instructions at the top.

- Use bullet points over paragraphs. They’re easier to parse.

- Reference tools by name (“Use the Read tool to load…”) so Claude knows exactly what to reach for.

- Include error handling for common failures. What should Claude do if an API call fails or data is missing?

- Use progressive disclosure: keep the core instructions in SKILL.md and move detailed reference docs to a reference/ subfolder.
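The 500-line guideline is simple to check automatically. Here’s a rough sketch that splits a SKILL.md into frontmatter and instructions at the closing --- and checks the file length. The helper names are hypothetical, and the split is deliberately naive: it looks for delimiter lines and doesn’t parse the YAML.

```python
MAX_LINES = 500  # the guideline from this post, not a hard limit enforced by Claude


def split_skill(text: str) -> tuple[str, str]:
    """Split SKILL.md text into (frontmatter, instructions) at the --- delimiters."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return "", text  # no frontmatter at all
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            return "\n".join(lines[1:i]), "\n".join(lines[i + 1:])
    return "", text  # opening delimiter without a closing one


def is_within_limit(text: str, max_lines: int = MAX_LINES) -> bool:
    """True if the whole file stays within the line guideline."""
    return len(text.splitlines()) <= max_lines
```

If `is_within_limit` comes back False, that’s your cue to move reference material out of SKILL.md and into the reference/ subfolder.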

Testing Your Skill

You’ve written the skill. Now you need to verify it actually works. There are three approaches, and I’d recommend starting with the first and working your way up.

Manual Testing (Claude.ai):

Upload your skill as a zip file, run test queries, and observe behavior. This is the fastest way to iterate. No setup required, just drag and drop.

Scripted Testing (Claude Code):

Use the skill-creator tool’s Eval mode. Define test prompts and expected behaviors, then run repeatable validation across changes. Great for catching regressions.

Programmatic Testing (API):

Build evaluation suites via the Messages API. Use the skill-creator’s Benchmark mode for standardized assessment.

Regardless of which approach you use, test across three areas:

Trigger Tests

Does the skill activate when it should, and stay quiet when it shouldn’t?

Should trigger:

"Plan the next sprint"

"What's our sprint velocity?"

"Prepare for sprint review"

Should NOT trigger:

"Write a Python function"

"Summarise this document"

"Help me debug this error"

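If you keep your trigger prompts in code, you can rerun them after every description change. The harness below is a sketch under a big assumption: `triggers` is whatever function you write to ask a real Claude session whether the skill activated. The `keyword_stub` included here is only a stand-in so the example runs on its own. It is not how Claude decides.

```python
from typing import Callable

SHOULD_TRIGGER = [
    "Plan the next sprint",
    "What's our sprint velocity?",
    "Prepare for sprint review",
]
SHOULD_NOT_TRIGGER = [
    "Write a Python function",
    "Summarise this document",
    "Help me debug this error",
]


def run_trigger_tests(triggers: Callable[[str], bool]) -> list[str]:
    """Return a description of every prompt where triggering went the wrong way."""
    failures = []
    for prompt in SHOULD_TRIGGER:
        if not triggers(prompt):
            failures.append(f"missed: {prompt}")
    for prompt in SHOULD_NOT_TRIGGER:
        if triggers(prompt):
            failures.append(f"false positive: {prompt}")
    return failures


def keyword_stub(prompt: str) -> bool:
    """Stand-in only: replace with a call that checks a real session."""
    return "sprint" in prompt.lower()
```

An empty list from `run_trigger_tests` means every prompt behaved as expected. Anything else tells you exactly which prompt to look at.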
Functional Tests

Does the skill produce correct, complete output?

Given: A Jira board with 15 open issues and 3 blocked

When: User says "Generate sprint report"

Then:

- Report includes all 15 issues with status

- Blocked items highlighted at top

- Velocity chart covers last 3 sprints

- Output is valid Markdown

Baseline Comparison

Is the skill actually better than using Claude without it?

Without skill: Generic response, misses Jira integration,

no consistent format, ~2 minutes of follow-up prompts

With skill: Structured report, pulls live data, consistent format,

single prompt to completion

If your skill isn’t meaningfully better than the baseline, it needs more work, or it might not be worth building as a skill at all.

Using the skill-creator Tool

The skill-creator is your development companion. It has four modes:

- Create: Describe what you want, and it drafts the initial SKILL.md along with test cases. Great for getting past the blank page.

- Eval: Define test prompts with expected behaviors and validate that your skill holds up across all of them.

- Improve: Analyzes your description against sample prompts and suggests edits for better triggering accuracy.

- Benchmark: Runs a standardized assessment using your evals, giving you a score to track over time.

Here’s a development pattern that works really well, the Claude A / Claude B approach:

- Claude A is your development partner. Work with it to create and refine the skill.

- Claude B is a fresh instance with the skill loaded. Test with Claude B on real tasks.

- Observe Claude B’s behavior. Does it trigger correctly? Is the output right? Does it handle edge cases?

- Bring those observations back to Claude A and iterate.

The key insight is that you iterate based on observed behavior, not assumptions. What you think Claude will do with your instructions and what it actually does are often different. Testing with a fresh instance catches those gaps.

Iteration Based on Feedback

Once your skill is in use, you’ll hit common issues. Here’s a troubleshooting guide:

Undertriggering (the skill doesn’t activate when it should):

- Your description is too narrow or missing keywords the user naturally reaches for.

- Fix: Add more trigger phrases and scenarios to the description.

- Fix: Verify the skill is visible by asking Claude “What skills are available?”

Overtriggering (the skill activates when it shouldn’t):

- Your description is too broad and matches on common terms.

- Fix: Add negative triggers to your description (“Do NOT use this skill for simple data exploration”). The description is what Claude reads when deciding whether to trigger, so an exclusion that lives only in the instruction body arrives too late.

- Fix: Set disable-model-invocation: true to make it manual-only while you refine the description.

Execution issues (the skill triggers but produces bad output):

- Instructions are too verbose and Claude is skimming past critical steps.

- Fix: Keep SKILL.md under 500 lines.

- Fix: Put the most important instructions at the top of the file.

- Fix: Use bullet points and numbered lists. Avoid dense paragraphs.

What’s Next

You now have everything you need to build, test, and iterate on a Claude Code skill. The fundamentals are straightforward: a well-named folder, a SKILL.md with clear frontmatter, specific instructions, and a testing loop.

In Part 3, we’ll level up: five proven skill patterns for real-world workflows, distribution strategies for sharing skills across your team, and a complete troubleshooting guide for when things go wrong.