CloudWatch Logs Just Got an HTTP Endpoint. That Changes More Than You Think.

- Stephen Jones

- AWS

- March 17, 2026


Every time I set up log shipping from a non-AWS source to CloudWatch, the same friction shows up. Install an agent. Configure IAM credentials. Implement SigV4 signing. Manage rotation. It works, but it is a lot of ceremony for “send this text to that place.”

AWS quietly changed that. CloudWatch Logs now has an HTTP Log Collector (HLC) endpoint that accepts plain HTTP POST requests with bearer token authentication. No SDK. No SigV4. No agent. Just a curl command and a token.

It is still in preview and only available in four US regions. But the architectural implications are worth paying attention to now.

What Changed

CloudWatch Logs has always accepted data over HTTPS, but through the PutLogEvents API, which requires AWS Signature Version 4 request signing. SigV4 is secure, but implementing it from scratch on constrained devices or legacy systems is genuinely painful. You need to compute canonical request hashes, derive signing keys, handle clock skew, and manage credential rotation.

The new HLC endpoint simplifies this to:

curl -X POST \
  'https://logs.us-east-1.amazonaws.com/services/collector/event?logGroup=/my-app/logs&logStream=stream-1' \
  -H "Authorization: Bearer YOUR_BEARER_TOKEN" \
  -H "Content-Type: application/json" \
  -d '{"event":[{"time":1730141374.001,"event":"User login successful","host":"app-01","severity":"info"}]}'

That is it. Any system that can make an HTTP POST with a header can now ship logs to CloudWatch.

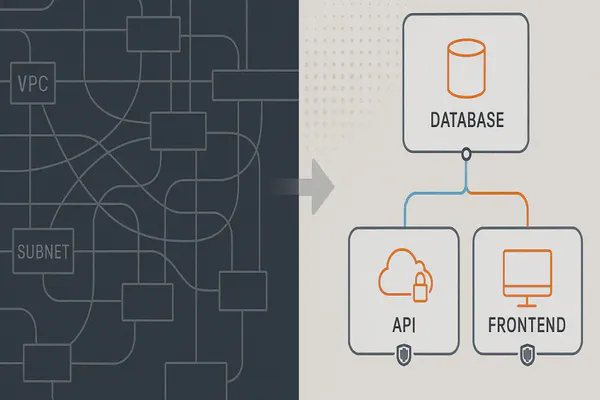

Three Ingestion Paths

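AWS now offers three distinct HTTP-based ingestion methods for CloudWatch Logs: the HLC endpoint, the OTLP endpoint, and the PutLogEvents API. The table below maps them out alongside the two agent-based options, because the documentation scatters them across different pages: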

| Method | Authentication | Agent Required | Complexity | Best For |
|---|---|---|---|---|
| HLC Endpoint | Bearer token | No | Very Low | IoT, on-prem, non-AWS, quick integrations |
| OTLP Endpoint | SigV4 | No (but typically needs OTel Collector) | Medium | OpenTelemetry-native environments |
| PutLogEvents API | SigV4 | No (but needs SDK) | Medium-High | Application code with AWS SDK |
| CloudWatch Agent | IAM | Yes | Medium | EC2, on-prem servers with full agent support |
| Fluent Bit | IAM | Yes (lightweight) | Medium | Kubernetes, ECS, EKS |

The HLC endpoint sits in a category that did not exist before: zero-dependency HTTP log shipping. The OTLP endpoint (at https://logs.<region>.amazonaws.com/v1/logs) also accepts HTTP but still requires SigV4. The HLC path is the only one that trades signing complexity for bearer token simplicity.

How to Set It Up

1. Generate an API Key

Navigate to CloudWatch > Settings > Logs in the AWS Console. Under API Keys, choose Generate API key. This creates an IAM user with the necessary permissions and returns a bearer token (ServiceCredentialSecret).

Store the token immediately. You cannot retrieve it later.

2. Create Your Log Group and Stream

aws logs create-log-group --log-group-name /my-app/hlc-logs

aws logs create-log-stream --log-group-name /my-app/hlc-logs --log-stream-name stream-1

3. Send Logs

curl -X POST \
  'https://logs.us-east-1.amazonaws.com/services/collector/event?logGroup=/my-app/hlc-logs&logStream=stream-1' \
  -H "Authorization: Bearer $HLC_TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "event": [
      {
        "time": '"$(date +%s)"'.000,
        "event": "Deployment completed successfully",
        "host": "'"$(hostname)"'",
        "severity": "info"
      }
    ]
  }'

The payload is a JSON object whose event key holds an array of events. Each entry in that array carries time (Unix epoch seconds, with optional fractional part), event (the log message), host, and severity fields.
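Hand-writing that JSON inside a quoted curl argument gets fragile once messages contain quotes or you want more than one event per request. Here is a minimal sketch of building the payload in plain shell. The helper names (json_escape, hlc_event, hlc_payload) are mine, not part of any AWS tooling, and the escaping only handles backslashes and double quotes, so it assumes messages contain no raw newlines or control characters.

```shell
# Hypothetical helpers for assembling an HLC payload without jq or an SDK.

# Escape backslashes and double quotes so a value is safe inside a JSON string.
# Assumption: the value has no newlines or other control characters.
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Emit one event object. Arguments: epoch seconds, message, host, severity.
hlc_event() {
  printf '{"time":%s,"event":"%s","host":"%s","severity":"%s"}' \
    "$1" "$(json_escape "$2")" "$(json_escape "$3")" "$(json_escape "$4")"
}

# Wrap any number of event objects in the {"event":[...]} envelope.
# The body runs in a subshell so the IFS change does not leak out.
hlc_payload() (
  IFS=,
  printf '{"event":[%s]}' "$*"
)

# Two events in one request body.
payload=$(hlc_payload \
  "$(hlc_event 1730141374.001 'User login successful' app-01 info)" \
  "$(hlc_event 1730141375.250 'Config "prod" loaded' app-01 info)")
printf '%s\n' "$payload"
```

The resulting string can be passed to curl with -d "$payload". If jq is available on the box, it is a more robust way to do the escaping.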

4. IAM Permissions (Auto-Created)

The API key generation creates an IAM user with these permissions:

- logs:PutLogEvents
- logs:CallWithBearerToken
- KMS permissions if the log group uses encryption

You can scope token expiration using the iam:ServiceSpecificCredentialAgeDays condition key. Do this. Unbounded tokens are a security incident waiting to happen.
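As a rough sketch of what that could look like, the snippet below writes an IAM policy that denies creating long-lived credentials. Several things here are my assumptions rather than anything confirmed in the preview documentation: that key generation goes through iam:CreateServiceSpecificCredential (the ServiceCredentialSecret field name suggests it), the 90-day ceiling, and the file name. Also note that a numeric condition does not match when the key is absent from the request, so verify in a test account that a key requested with no expiry is actually blocked.

```shell
# Sketch only: deny creation of service-specific credentials that would
# live longer than 90 days. The ceiling and file name are illustrative.
cat > hlc-key-age-policy.json <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "DenyLongLivedServiceCredentials",
      "Effect": "Deny",
      "Action": "iam:CreateServiceSpecificCredential",
      "Resource": "*",
      "Condition": {
        "NumericGreaterThan": {
          "iam:ServiceSpecificCredentialAgeDays": "90"
        }
      }
    }
  ]
}
EOF
```

Attach the policy to whichever principals are allowed to generate keys, not to the auto-created IAM user that holds the logs permissions.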

Where This Gets Interesting

The obvious use case is IoT. An ESP32 or Raspberry Pi that can make an HTTP POST can now ship logs directly to CloudWatch without going through IoT Core rules or running a local agent. That is a meaningful simplification for fleet telemetry.

But the more interesting use cases are the ones that were previously just annoying enough to not bother with:

On-premises legacy systems. That Java 8 application running on a 2014 RHEL box that nobody wants to touch? It can probably make an HTTP POST. Now it can ship logs to CloudWatch without installing the CloudWatch agent and managing IAM credentials on-prem.

CI/CD pipelines. Adding a curl command to a GitHub Actions step or Jenkins pipeline is trivial. No SDK dependency, no credential helper configuration.

Multi-cloud log centralisation. A GCP Cloud Function or Azure Function can forward logs to CloudWatch with a single HTTP call. Useful if CloudWatch is your single pane of glass.

Third-party webhooks. Any system that supports configurable webhook destinations can now target CloudWatch directly with bearer auth.
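For the CI/CD case in particular, the one thing I would add to a bare curl is a guard so that a logging hiccup never fails the build. A minimal sketch, assuming the token is exposed to the job as HLC_TOKEN; the function names and the 10-second timeout are my own choices.

```shell
# Hypothetical helper: build the HLC URL from region, log group, and log stream.
hlc_url() {
  printf 'https://logs.%s.amazonaws.com/services/collector/event?logGroup=%s&logStream=%s' \
    "$1" "$2" "$3"
}

# Post a ready-made JSON payload. Arguments: URL, payload.
# Returns non-zero on a missing token, a timeout, or an HTTP error status.
hlc_send() {
  if [ -z "${HLC_TOKEN:-}" ]; then
    echo "HLC_TOKEN is not set" >&2
    return 1
  fi
  curl --silent --show-error --fail --max-time 10 \
    -X POST "$1" \
    -H "Authorization: Bearer $HLC_TOKEN" \
    -H "Content-Type: application/json" \
    -d "$2"
}

# Typical pipeline usage (commented out so sourcing this file sends nothing):
#   url=$(hlc_url us-east-1 /my-app/hlc-logs stream-1)
#   hlc_send "$url" "$payload" || echo "warning: log shipping failed" >&2
```

The trailing || turns a failed POST into a warning, which is usually what you want from a deployment step that is only reporting status.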

The Security Tradeoff

This simplicity comes with a deliberate security tradeoff that is worth understanding.

SigV4 signs the entire HTTP request: headers, body, timestamp. If someone intercepts a signed request, they cannot modify it without invalidating the signature. Bearer tokens have no such protection. A stolen token can be reused from any source, with any payload, until it expires or is rotated.

This means:

- Treat HLC tokens like API keys. Vault them. Rotate them. Monitor for leaks.

- Enforce expiration via the iam:ServiceSpecificCredentialAgeDays IAM condition.

- Scope log groups. A token has access to the log groups its IAM user can write to. Least-privilege still applies.

- Monitor usage. CloudTrail logs PutLogEvents calls regardless of auth method. Watch for unexpected sources.
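One practical leak vector is the command line itself: a token passed with -H "Authorization: Bearer ..." is visible in the process list and often in shell history. A small sketch of one way around that, using curl's ability to read headers from a file (-H @file, available since curl 7.55.0). The helper name and file location are hypothetical.

```shell
# Hypothetical helper: write the Authorization header to a private file.
# Arguments: token, destination file.
# The subshell keeps the tighter umask from affecting the caller. If the
# file already exists, its existing permissions are left unchanged.
write_auth_header() (
  umask 077
  printf 'Authorization: Bearer %s\n' "$1" > "$2"
)

# Usage (commented out so nothing is sent when this is sourced):
#   header_file=$(mktemp)
#   write_auth_header "$HLC_TOKEN" "$header_file"
#   curl -X POST "$url" -H @"$header_file" \
#     -H "Content-Type: application/json" -d "$payload"
#   rm -f "$header_file"
```

This does not replace a proper secrets manager. It only keeps the token out of argv for the lifetime of the request.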

For most use cases, this tradeoff is fine. You are shipping logs, not processing payments. But if your logs contain PII or are subject to compliance requirements, evaluate whether bearer token auth meets your control framework.

What to Watch For

The HLC endpoint is still in preview. That means:

- Four US regions only: us-east-1, us-west-1, us-west-2, us-east-2

- No SLA. Do not build production logging pipelines on this today.

- Breaking changes possible. The payload format and endpoint URL could change before GA.

- No ap-southeast-2 yet. For those of us in Australia, we are waiting.

The standard CloudWatch Logs limits still apply: 256 KB per event, 1 MB per batch, 10,000 events per batch, 2,500 TPS per account (5,000 in large regions).
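If you are batching by hand, a cheap pre-flight check saves a rejected request. The sketch below reads one log message per line and compares the batch against the size and count limits quoted above. I am reading KB and MB as binary units, and the check counts message bytes only, ignoring the JSON envelope and any per-event overhead the service may add, so treat it as a lower bound. The function name is hypothetical.

```shell
# Limits from the text, with KB and MB taken as binary units (an assumption).
MAX_EVENT_BYTES=$((256 * 1024))
MAX_BATCH_BYTES=$((1024 * 1024))
MAX_BATCH_EVENTS=10000

# Hypothetical pre-flight check: one message per line on stdin.
# Exits 0 if the lines fit in a single batch, 1 otherwise.
check_batch() {
  LC_ALL=C awk -v max_event="$MAX_EVENT_BYTES" \
               -v max_batch="$MAX_BATCH_BYTES" \
               -v max_events="$MAX_BATCH_EVENTS" '
    {
      n++
      bytes = length($0)          # bytes, because of LC_ALL=C
      total += bytes
      if (bytes > max_event) { print "event " n " exceeds the per-event limit"; bad = 1 }
    }
    END {
      if (n > max_events)    { print "too many events: " n; bad = 1 }
      if (total > max_batch) { print "batch too large: " total " bytes"; bad = 1 }
      exit bad + 0
    }'
}

# Usage: check_batch < app.log && echo "fits in one request"
```

The TPS limit is a different problem. It needs rate limiting on the sender, which this check does not attempt.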

Pricing is identical to standard ingestion: $0.50/GB in us-east-1 for Standard class, $0.25/GB for Infrequent Access. No premium for using the HLC path.
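To put a number on that, here is a back-of-the-envelope helper. It multiplies monthly volume by the per-GB ingestion rate and nothing else: storage, queries, and data transfer are not included, and the rates are the us-east-1 figures quoted above. The function name is hypothetical.

```shell
# Hypothetical helper: monthly ingestion cost in USD.
# Arguments: volume in GB, optional per-GB rate (defaults to Standard class).
hlc_ingest_cost() {
  LC_ALL=C awk -v gb="$1" -v rate="${2:-0.50}" \
    'BEGIN { printf "%.2f\n", gb * rate }'
}

hlc_ingest_cost 200        # Standard class: prints 100.00
hlc_ingest_cost 200 0.25   # Infrequent Access: prints 50.00
```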

The Bigger Picture

AWS deprecated FluentD support for CloudWatch Logs in February 2025, pushing users toward Fluent Bit. The HLC endpoint represents a different strategy entirely: making CloudWatch accessible without any agent or SDK dependency at all.

This is a centralisation play. Combined with the 30 new Config resource types for tracking service configurations and the STS identity provider claims validation for securing cross-account access, AWS is steadily closing gaps in its observability and security story. If any device on any network can ship logs to CloudWatch with a bearer token and a POST request, the argument for using CloudWatch as your universal log sink gets significantly stronger. That competes directly with Datadog, Splunk, and Elastic for hybrid and multi-cloud log aggregation.

The three-path ingestion model (PutLogEvents for SDK users, OTLP for OpenTelemetry adopters, HLC for everyone else) covers every authentication preference. The question is whether AWS will bring HLC to GA with enough regional coverage to make it practical for production workloads outside the US.

I will be watching the preview closely. If you have IoT, on-prem, or hybrid logging pain points, this is worth experimenting with now so you are ready when it goes GA.