Prototype in Hours, Deploy in Production: n8n to AWS Bedrock AgentCore

- Stephen Jones

- AWS, AI

- March 18, 2026

Your team just got the green light to build an AI agent for customer support escalation. The architect says “CDK and AgentCore.” The PM says “show me something by Friday.”

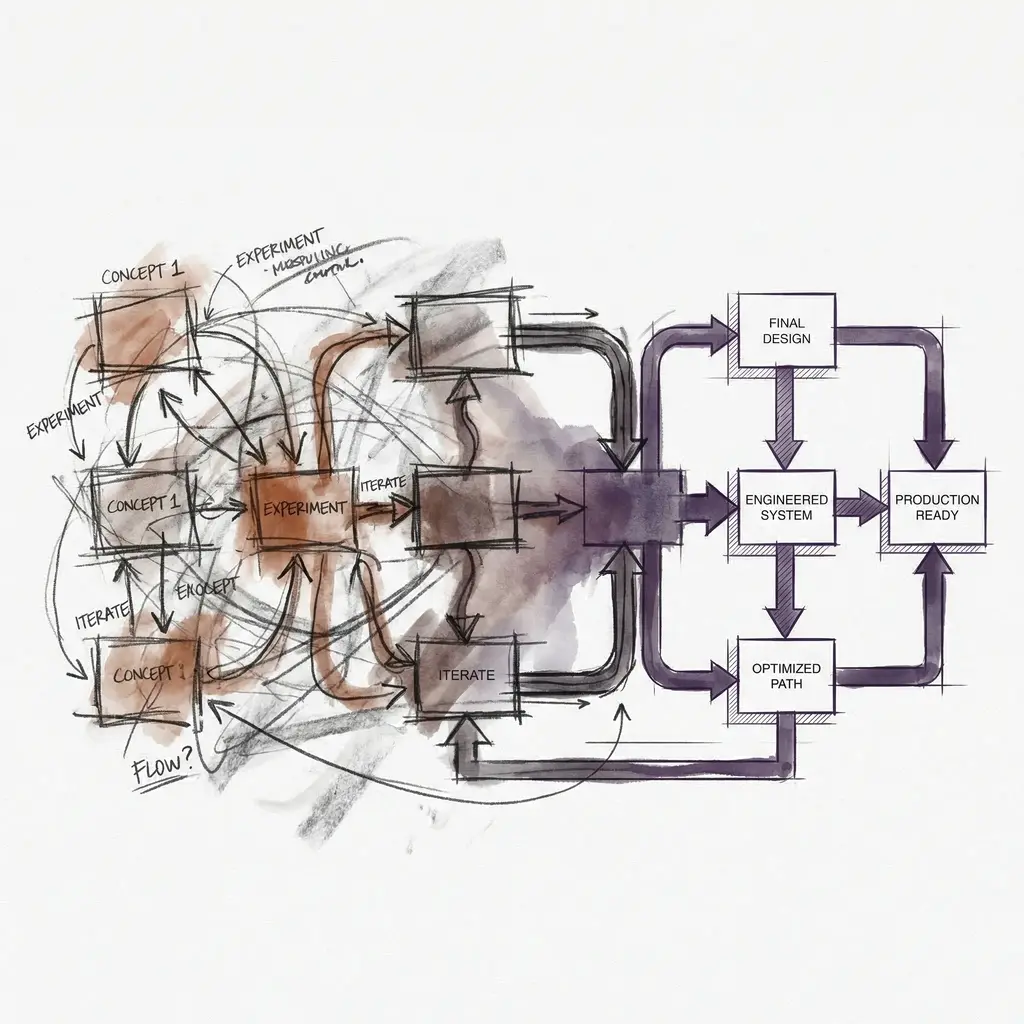

You have two choices. Spend two weeks writing TypeScript CDK stacks, IAM policies, Docker configs, and ECR pipelines before anyone sees the agent think. Or spend two hours in n8n, prove the logic works, then translate to production IaC with confidence.

This post walks through the second option. It is not a shortcut. It is a discipline. And it matters because 88% of AI pilots never reach production. The teams that succeed separate agent logic validation from infrastructure provisioning. They work in sequence, logic first and infrastructure second, rather than attempting both at once.

The POC Tax

AWS Bedrock AgentCore is a powerful platform for deploying production AI agents. I covered the toolkit setup and the policy and evaluation controls in earlier posts. But it has a high minimum viable deployment:

- Runtime needs a containerised agent image pushed to ECR

- Gateway needs OpenAPI specs or Lambda functions defining every tool

- IAM roles need scoping for each service interaction

- CDK stacks need to wire it all together before a single prompt is tested

The result? Teams jump straight to production code because “we’ll need it eventually.” Then they burn three rounds of CDK deploy cycles discovering the agent’s tool-calling logic was wrong from the start. McKinsey’s research suggests models account for only 15% of AI project costs, with integration and operations eating the remaining 85%. Getting the logic right before provisioning infrastructure is not laziness. It is economics.

The Two-Hour POC

n8n’s AI Agent node provides a built-in ReAct loop with tool binding, making it an ideal prototyping surface for agentic workflows. Here is what we are building: an agent that receives support tickets, classifies severity, searches a knowledge base, pulls customer history, and decides whether to draft a response or escalate to a human.

    Webhook Trigger (incoming ticket JSON)
            |
            v
    AI Agent Node (Claude / GPT-4)
      - System prompt: classification + response drafting instructions
      - Tool 1: HTTP Request -> Knowledge Base search API
      - Tool 2: HTTP Request -> Customer history CRM endpoint
            |
            v
    IF Node (severity > threshold OR agent confidence < 70%)
        |                   |
        v                   v
       YES                  NO
        |                   |
        v                   v
    Slack msg           Draft email response
    to on-call          to review queue
    + Jira
    ticket

What You Are Actually Validating

This is not a toy demo. In those two hours, you are testing the things that matter most.

Prompt engineering. The system prompt is the soul of your agent. In n8n, you iterate it 10 or 15 times with real inputs and watch the agent’s reasoning in the execution trace. Each iteration takes seconds, not deploy cycles.

Tool selection logic. Does the agent call the knowledge base search before or after checking customer history? Does it ever skip a tool it should have called? n8n’s execution history shows every tool call, every parameter, every result.
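One way to make that check repeatable is to copy the tool calls out of a run and audit them against the order you expect. The sketch below is a minimal illustration: the `ToolCall` shape, the tool names, and `auditTrace` are all hypothetical and do not reflect n8n's actual execution export format.

```typescript
// Hypothetical shape of one tool call copied out of an execution trace.
// Field names are illustrative, not n8n's export format.
interface ToolCall {
  tool: string;
  params: Record<string, unknown>;
}

interface TraceAudit {
  missing: string[]; // expected tools the agent never called
  orderOk: boolean;  // true if the tools that were called appear in the expected order
}

// Check that every expected tool was called, and in the expected relative order.
function auditTrace(calls: ToolCall[], expectedOrder: string[]): TraceAudit {
  const firstIndex = (name: string): number =>
    calls.findIndex((c) => c.tool === name);

  const missing = expectedOrder.filter((name) => firstIndex(name) === -1);

  // Positions of the expected tools that did appear, in expected order.
  const positions = expectedOrder.map(firstIndex).filter((i) => i !== -1);
  const orderOk = positions.every(
    (pos, i) => i === 0 || (positions[i - 1] ?? -1) < pos
  );

  return { missing, orderOk };
}

// Example run: the agent checked customer history before searching the KB.
const trace: ToolCall[] = [
  { tool: "customer_history", params: { customerId: "c-123" } },
  { tool: "kb_search", params: { query: "login fails after password reset" } },
];

const audit = auditTrace(trace, ["kb_search", "customer_history"]);
// audit.missing is empty, audit.orderOk is false: both tools ran, but out of order.
```

Whether an out-of-order call is actually a problem is a judgment about your agent; the audit only surfaces it.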

Escalation threshold. Your IF node logic encodes a business rule. You can test 20 different tickets against it in minutes and tune the threshold before writing a single line of CDK.
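Writing the rule down as a small function makes it easy to carry the tuned threshold into production later. This is a sketch of the IF node condition from the diagram (severity above a threshold, or confidence below 70%); the 1 to 4 severity scale, the field names, and the sample cases are assumptions for illustration.

```typescript
type Severity = 1 | 2 | 3 | 4; // assumed scale: 1 = low, 4 = critical

interface AgentAssessment {
  severity: Severity;
  confidence: number; // 0..1, as reported by the agent (assumed field)
}

interface EscalationRule {
  severityThreshold: Severity; // escalate when severity is strictly above this
  minConfidence: number;       // escalate when confidence is strictly below this
}

// Mirrors the IF node: severity > threshold OR confidence < minimum.
function shouldEscalate(a: AgentAssessment, rule: EscalationRule): boolean {
  return a.severity > rule.severityThreshold || a.confidence < rule.minConfidence;
}

const rule: EscalationRule = { severityThreshold: 2, minConfidence: 0.7 };

// A small table of (assessment, expected decision) pairs, including boundaries.
const cases: Array<[AgentAssessment, boolean]> = [
  [{ severity: 1, confidence: 0.95 }, false], // routine and confident
  [{ severity: 3, confidence: 0.95 }, true],  // severe, regardless of confidence
  [{ severity: 2, confidence: 0.69 }, true],  // just under the confidence floor
  [{ severity: 2, confidence: 0.7 }, false],  // exactly on both boundaries
];

const mismatches = cases.filter(
  ([assessment, expected]) => shouldEscalate(assessment, rule) !== expected
);
// mismatches is empty when the rule agrees with every expected decision.
```

The boundary cases are the ones worth arguing about with the business owner before the rule ends up in a Lambda.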

Edge cases. What happens when the knowledge base returns zero results? When the customer has no history? When the ticket is in a language the agent was not prompted for? You will find these in n8n’s debug panel, not in a CloudWatch log after a production incident.
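For the zero-results case in particular, one option is to hand the agent an explicit "nothing found" message instead of an empty payload, so the gap shows up in the trace. The sketch below assumes a hypothetical `KbHit` shape and a `NO_RESULTS` marker of my own choosing; neither comes from n8n or any knowledge base API.

```typescript
// Hypothetical shape of one knowledge base search hit.
interface KbHit {
  title: string;
  snippet: string;
}

// Turn a search response into the text the agent sees as the tool result.
// An empty or missing result becomes an explicit marker rather than "".
function formatKbResult(hits: KbHit[] | null | undefined): string {
  if (!hits || hits.length === 0) {
    return "NO_RESULTS: the knowledge base returned no matching articles.";
  }
  return hits
    .map((hit, i) => `${i + 1}. ${hit.title}: ${hit.snippet}`)
    .join("\n");
}

const emptyCase = formatKbResult([]);
const normalCase = formatKbResult([
  { title: "Reset password", snippet: "Use the reset link." },
]);
// normalCase is "1. Reset password: Use the reset link."
```

The system prompt can then say what to do when it sees the marker, which is usually to escalate rather than improvise.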

From Prototype to Production CDK

With the agent logic validated, the CDK stack writes itself. You know exactly what tools the Gateway needs, what memory type works, and what the agent’s behaviour looks like under real inputs. Others have documented this exact migration path, and the pattern is consistent: n8n does not give you a production blueprint, but it does give you a validated spec, a structured decomposition of the agent’s needs.

Here is the production skeleton using the @aws-cdk/aws-bedrock-agentcore-alpha module:

    import * as cdk from 'aws-cdk-lib';
    import * as lambda from 'aws-cdk-lib/aws-lambda';
    import * as agentcore from '@aws-cdk/aws-bedrock-agentcore-alpha';
    import { Construct } from 'constructs';

    // The escalation Lambda is passed in; building it is outside this skeleton.
    export interface SupportAgentStackProps extends cdk.StackProps {
      readonly escalationFn: lambda.IFunction;
    }

    export class SupportAgentStack extends cdk.Stack {
      constructor(scope: Construct, id: string, props: SupportAgentStackProps) {
        super(scope, id, props);

        // Memory and Gateway are declared first because the Runtime references their IDs.

        // n8n Chat Memory -> AgentCore Memory
        // Upgrade from n8n's in-session window buffer to persistent
        // episodic memory that survives across sessions
        const memory = new agentcore.Memory(this, 'AgentMemory', {
          type: agentcore.MemoryType.EPISODIC,
        });

        // n8n HTTP Request Tools -> AgentCore Gateway
        // No speculative tool definitions: you know exactly which
        // APIs the agent needs because you watched it use them in n8n
        const gateway = new agentcore.Gateway(this, 'ToolGateway', {
          tools: [
            agentcore.Tool.fromOpenApiSpec('./tools/kb-search.yaml'),
            agentcore.Tool.fromOpenApiSpec('./tools/customer-history.yaml'),
            agentcore.Tool.fromLambda(props.escalationFn), // n8n IF node -> Lambda
          ],
        });

        // n8n AI Agent Node -> AgentCore Runtime
        // Your reasoning loop, now containerised with session isolation
        // and 8-hour execution windows. Any framework: Strands, LangGraph, CrewAI.
        const runtime = new agentcore.AgentRuntime(this, 'SupportAgent', {
          code: agentcore.Code.fromAsset('./agent'),
          memoryId: memory.memoryId,
          gatewayId: gateway.gatewayId,
        });

        // No n8n equivalent: AgentCore Policy (preview)
        // Real-time interception of every tool call. Enforces boundaries
        // like "never expose PII" or "no refunds above $500"
      }
    }

Note:

@aws-cdk/aws-bedrock-agentcore-alpha is an alpha module. The API is subject to change. See the CDK samples repository for current examples.

Where the Map Stops

Here is where intellectual honesty matters. The n8n prototype gives you a structured decomposition of your agent’s needs. It is not a production blueprint. Several critical things do not translate, and pretending otherwise is how prototypes become production incidents.

Orchestration internals. n8n’s ReAct loop hides LangChain defaults behind a visual interface: token budgets, tool selection strategy, memory windowing, retry behaviour. In AgentCore, you own all of that explicitly. That is not translation. It is reimplementation with a validated reference.
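To make "owning it explicitly" concrete, here is a minimal sketch of a bounded reasoning loop with a hard step limit. It is not AgentCore's API, and it is not how n8n or LangChain implement their loop; `Model`, `Tools`, `runLoop`, and the stub model are hypothetical stand-ins, with the LLM call replaced by a synchronous function.

```typescript
// One reasoning step either requests a tool call or returns a final answer.
type Step =
  | { kind: "tool"; tool: string; input: string }
  | { kind: "final"; answer: string };

type Model = (history: string[]) => Step;               // stand-in for an LLM call
type Tools = Record<string, (input: string) => string>; // stand-in for tool bindings

interface LoopResult {
  answer: string | null;
  steps: number;
  stoppedBy: "final" | "max_steps" | "unknown_tool";
}

// Run the loop until the model answers, names an unknown tool, or hits maxSteps.
function runLoop(model: Model, tools: Tools, question: string, maxSteps: number): LoopResult {
  const history: string[] = [`user: ${question}`];
  for (let step = 1; step <= maxSteps; step++) {
    const next = model(history);
    if (next.kind === "final") {
      return { answer: next.answer, steps: step, stoppedBy: "final" };
    }
    const tool = tools[next.tool];
    if (!tool) {
      return { answer: null, steps: step, stoppedBy: "unknown_tool" };
    }
    history.push(`tool ${next.tool}: ${tool(next.input)}`);
  }
  return { answer: null, steps: maxSteps, stoppedBy: "max_steps" };
}

// Stub model: searches the knowledge base once, then answers.
const stubModel: Model = (history) =>
  history.length === 1
    ? { kind: "tool", tool: "kb_search", input: "password reset" }
    : { kind: "final", answer: "Draft reply based on the KB article." };

const stubTools: Tools = { kb_search: () => "Article 42: resetting your password" };

const loopResult = runLoop(stubModel, stubTools, "I cannot log in", 5);
// loopResult.stoppedBy is "final" after 2 steps.
```

Every branch here is a decision the visual builder made for you during the prototype: the step limit, what happens on an unknown tool, what goes into the history.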

Failure modes at scale. Your POC tells you nothing about latency under concurrent load, inference cost at production volume, or how the agent degrades when APIs respond slowly. n8n fails gracefully with a red node in a debug panel. Production agents fail silently, expensively, or both.

Security posture. This is the gap security-minded readers will care about most. n8n stores credentials in its database, and workflow JSON can leak secrets on export. Your prototype likely ran with broad API keys in a single-process runtime with no isolation. AgentCore’s microVM isolation, IAM scoping, and Policy enforcement exist precisely because agentic workflows make unpredictable tool calls. Never prototype with production credentials or real customer data in n8n. Use synthetic data, scoped sandbox credentials, and mock endpoints for anything touching PII.
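A cheap guard before sharing an exported workflow is to scan the JSON for keys that look like credentials. The sketch below walks any parsed JSON value and reports suspicious paths; the key pattern and the sample object are assumptions for illustration, and the object does not claim to match n8n's real export schema.

```typescript
// Key names that often hold credentials. This pattern is an assumption; extend it.
const SUSPICIOUS_KEY = /(api[_-]?key|secret|token|password|authorization)/i;

// Recursively collect JSON paths whose key looks sensitive and holds a non-empty string.
function findSuspiciousPaths(value: unknown, path: string = "$"): string[] {
  const found: string[] = [];
  if (Array.isArray(value)) {
    value.forEach((item, i) => {
      found.push(...findSuspiciousPaths(item, `${path}[${i}]`));
    });
  } else if (value !== null && typeof value === "object") {
    const obj = value as Record<string, unknown>;
    for (const key of Object.keys(obj)) {
      const child = obj[key];
      const childPath = `${path}.${key}`;
      if (SUSPICIOUS_KEY.test(key) && typeof child === "string" && child.length > 0) {
        found.push(childPath);
      }
      found.push(...findSuspiciousPaths(child, childPath));
    }
  }
  return found;
}

// Hypothetical exported workflow with a key pasted directly into a node parameter.
const exportedWorkflow = {
  name: "support-escalation-poc",
  nodes: [
    {
      name: "KB search",
      parameters: { url: "https://kb.example.test/search", headerApiKey: "sk-test-123" },
    },
    { name: "Webhook", parameters: { path: "tickets" } },
  ],
};

const leaks = findSuspiciousPaths(exportedWorkflow);
// leaks is ["$.nodes[0].parameters.headerApiKey"]
```

A key-name scan only catches the obvious cases. It will miss a secret embedded in a URL or a prompt, so it complements sandbox credentials rather than replacing them.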

Observability. n8n’s execution history is excellent for debugging individual runs. It has no equivalent to CloudWatch dashboards tracking token usage trends, latency percentiles, and error rates across thousands of sessions. Production observability is a separate engineering effort, not a migration step.
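To see why per-run debugging is not enough, it helps to compute a percentile by hand. The sketch below uses the nearest-rank method on made-up latency samples; the numbers are invented for illustration, and a production setup would get these figures from a metrics service rather than application code.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of
// samples are less than or equal to it.
function percentile(values: number[], p: number): number {
  if (values.length === 0) {
    throw new Error("percentile of an empty sample is undefined");
  }
  const sorted = values.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1] as number;
}

function mean(values: number[]): number {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Invented end-to-end latencies (ms) for ten agent sessions, one of them slow.
const latenciesMs = [820, 910, 1040, 1100, 1250, 1300, 1480, 1900, 2600, 7400];

const p50 = percentile(latenciesMs, 50); // 1250
const p95 = percentile(latenciesMs, 95); // 7400
const avg = mean(latenciesMs);           // 1980
```

The mean and the median both look tolerable here, while the p95 shows the session a customer would actually complain about. That is the kind of signal that only appears once you aggregate across runs.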

The n8n prototype validated the logic. AgentCore adds trust, safety, scale, and observability. Knowing where the boundary sits is what separates a responsible deployment from a lucky one.

When to Skip n8n Entirely

Intellectual honesty also means acknowledging when this approach adds no value:

- You are redeploying a known pattern. If this is your fifth support agent and the architecture is identical, go straight to CDK.

- The agent is trivially simple. A single-tool, single-prompt agent where the CDK boilerplate is the POC.

- You need A2A from day one. AgentCore Runtime supports the Agent-to-Agent (A2A) protocol for multi-agent orchestration. n8n has no equivalent.

- Your workflow touches regulated data. If compliance requires policy enforcement, audit trails, and IAM-scoped access during all testing, including prototyping, then AgentCore’s audit surface is non-negotiable from the start. n8n’s logging model was not built for compliance scrutiny.

- Your team already thinks in CDK. If writing a CDK stack is genuinely faster than learning n8n’s interface, the overhead of a new tool is not justified.

The best CDK stack is one you write after you know what to build. n8n gives you that knowledge in hours. But production demands capabilities that no prototyping tool can simulate: session isolation, persistent memory, real-time policy enforcement, and automated quality evaluations. These are not competing tools. They are sequential phases of responsible agent development.

Prototype fast. Know where the map stops. Deploy with confidence. And once you’re in production, make sure AWS Config is tracking your AgentCore resources. Governance visibility is what separates a responsible deployment from a lucky one.